In-Game Visual Referee Challenge

In 2022 and 2023, the Standard Platform League held technical challenges on detecting human referee gestures. In 2022, twelve different gestures had to be detected. Eleven of them were static and one was dynamic, i.e. it included motion. A NAO robot standing at the center of the field looked at a referee standing at the end of the halfway line and had to detect the gesture the referee showed after whistling. In 2023, another dynamic gesture was added and the challenge was conducted during all games of the preliminary rounds of the soccer competition (see this document, section 2). After the whistle for kick-offs, goals, and the end of a half, the referee showed a gesture that the robots had to detect. In both challenges, we used a two-step approach for gesture detection: a neural network detects keypoints on the referee's body, and then the spatial relations between some of these keypoints are checked to detect the actual gesture.

Keypoint Detection

MoveNet is used to detect 17 so-called keypoints in the images taken by the upper camera in NAO's head. Most of these keypoints are located on a person's joints, but some mark facial features, such as the ears, eyes, and nose. We use the single-pose version of MoveNet. The network delivers 17 triples, one for each type of keypoint, each consisting of x and y coordinates and a confidence. Two variants are available, both of which use RGB images as input: the Lightning variant processes an input of 192x192 pixels and runs at around 13.5 Hz on the NAO. The Thunder variant processes an input of 256x256 pixels and consequently runs slower (3.5 Hz on the NAO). We used the latter, because its detections proved to be more reliable.
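The network output described above can be unpacked as in the following minimal sketch. It assumes the layout documented for the public single-pose MoveNet models, i.e. 17 triples in the order (y, x, score) with coordinates normalized to the input image and keypoints in COCO order. The names `parse_keypoints` and `Keypoint` and the flat list used as input are hypothetical and do not describe how our inference code exposes the result.

```python
from typing import NamedTuple

# Keypoint order as documented for the public MoveNet models (COCO convention).
KEYPOINT_NAMES = [
    "nose", "left_eye", "right_eye", "left_ear", "right_ear",
    "left_shoulder", "right_shoulder", "left_elbow", "right_elbow",
    "left_wrist", "right_wrist", "left_hip", "right_hip",
    "left_knee", "right_knee", "left_ankle", "right_ankle",
]


class Keypoint(NamedTuple):
    x: float
    y: float
    confidence: float


def parse_keypoints(flat, region_size=384.0):
    """Turn 51 floats (17 x [y, x, score], normalized) into named keypoints.

    The coordinates are scaled to pixels of the square region that was fed
    into the network (region_size is an assumption of this sketch).
    """
    if len(flat) != 3 * len(KEYPOINT_NAMES):
        raise ValueError("expected 17 triples of (y, x, score)")
    keypoints = {}
    for index, name in enumerate(KEYPOINT_NAMES):
        y, x, score = flat[3 * index: 3 * index + 3]
        keypoints[name] = Keypoint(x * region_size, y * region_size, score)
    return keypoints
```

Keeping the confidence next to each coordinate pair makes it possible for later stages to ignore keypoints the network was unsure about.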

A square region around the center of the upper camera image is used as the input for the MoveNet network. The region has a fixed size of 384x384 pixels. It is scaled down to the required input size using bilinear interpolation and converted from the YUV422 color space to the RGB color space MoveNet expects. Using self-localization, the NAO looks at the position on the field where the referee should stand, at a fixed height, thereby centering the referee in the region used for detection.
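The preprocessing steps can be illustrated with the following sketch. It is not our implementation: the image size of 640x480 pixels in the usage note, the full-range BT.601 conversion coefficients, and all function names are assumptions made for illustration, and the resize works on a single channel stored as a list of rows.

```python
def crop_origin(image_width, image_height, region_size=384):
    """Top-left corner of a square region centered in the image."""
    return (image_width - region_size) // 2, (image_height - region_size) // 2


def yuv_to_rgb(y, u, v):
    """Convert one pixel using full-range BT.601 (an assumed variant)."""
    def clamp(value):
        return max(0, min(255, int(round(value))))

    r = y + 1.402 * (v - 128)
    g = y - 0.344136 * (u - 128) - 0.714136 * (v - 128)
    b = y + 1.772 * (u - 128)
    return clamp(r), clamp(g), clamp(b)


def bilinear_resize(channel, out_size):
    """Resize a square single-channel image using bilinear interpolation."""
    in_size = len(channel)
    scale = in_size / out_size

    def source_coordinate(index):
        # Map the center of an output pixel back into the input image.
        position = min(max((index + 0.5) * scale - 0.5, 0.0), in_size - 1.0)
        low = int(position)
        high = min(low + 1, in_size - 1)
        return low, high, position - low

    resized = []
    for out_y in range(out_size):
        y0, y1, fy = source_coordinate(out_y)
        row = []
        for out_x in range(out_size):
            x0, x1, fx = source_coordinate(out_x)
            top = channel[y0][x0] * (1 - fx) + channel[y0][x1] * fx
            bottom = channel[y1][x0] * (1 - fx) + channel[y1][x1] * fx
            row.append(top * (1 - fy) + bottom * fy)
        resized.append(row)
    return resized
```

For an assumed 640x480 image, `crop_origin(640, 480)` places the 384x384 region at column 128 and row 48. Scaling that region to the 256x256 input of the Thunder variant corresponds to `bilinear_resize(channel, 256)` per color channel.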

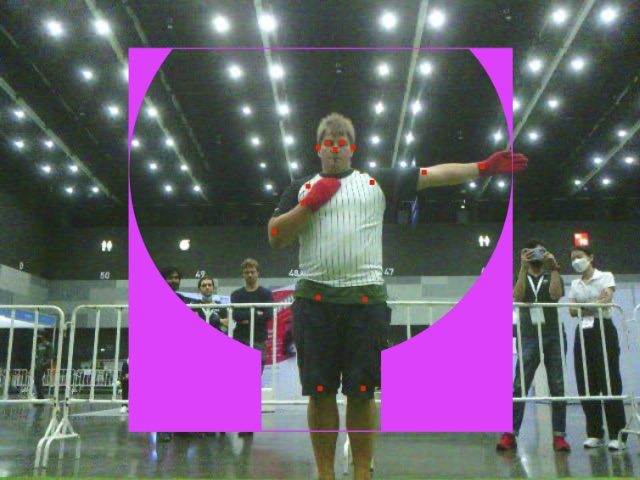

The single-pose version of MoveNet is designed to detect keypoints on a single person. Its behavior is undefined if several people are visible in the image. As spectators can be present in the background during the challenge, we try to increase the likelihood that the keypoints are placed on the actual referee. In the part of the image that is used as input for the network, we define a mask that excludes regions in which the referee cannot be visible. The idea is that the network will prefer a person who is mostly visible over one who is partially hidden, e.g. whose legs are covered. The mask consists of a rectangular region representing the area where the legs of the referee could be and a circular region representing the area the arms can reach. Everything outside these two regions is recolored to magenta. We also tried coloring it black, which was less effective, probably because the network simply considers these parts to be in shadow and still places keypoints in them. This setup was used for the detection from a fixed position in 2022. For the detection in actual games in 2023, the mask was also scaled based on the distance to the referee and the horizontal angle between the observing robot and the referee.
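The masking step can be sketched as follows. The function name, the parameter layout, and the representation of the image as a list of rows of RGB tuples are hypothetical. The actual sizes of the rectangle and the circle are not given here, so the caller has to supply them.

```python
MAGENTA = (255, 0, 255)


def apply_referee_mask(image, legs_rect, arms_circle):
    """Recolor every pixel outside the legs rectangle and the arms circle.

    image: list of rows of (r, g, b) tuples, modified in place.
    legs_rect: (left, top, right, bottom) with right and bottom exclusive.
    arms_circle: (center_x, center_y, radius).
    """
    left, top, right, bottom = legs_rect
    center_x, center_y, radius = arms_circle
    for y, row in enumerate(image):
        for x in range(len(row)):
            in_rect = left <= x < right and top <= y < bottom
            in_circle = (x - center_x) ** 2 + (y - center_y) ** 2 <= radius ** 2
            if not (in_rect or in_circle):
                row[x] = MAGENTA
    return image
```

Scaling the mask for different distances and viewing angles, as done in 2023, would amount to computing `legs_rect` and `arms_circle` from the robot's pose before calling the function.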

Gesture Detection

Gestures are detected by defining a set of constraints for each of them. Each constraint defines valid ranges for the distance between two keypoints and for the direction in which one of these keypoints is located relative to the other. The gestures are checked in a fixed order, and the first one whose constraints are all satisfied is accepted. This ordering allows using fewer constraints for gestures that are checked later. For instance, the gesture for goal consists of one arm pointing to the side and the other arm pointing at the center of the field, i.e. basically in the direction of the observing robot. MoveNet struggles with correctly placing the keypoints on the latter arm. Therefore, an arm pointing to the side is accepted as goal without checking the second arm if none of the other gestures was accepted.
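The constraint check and the fixed evaluation order can be sketched as below. This is a simplified illustration, not our implementation: the class and function names are hypothetical, the direction range is expressed as an expected angle with a tolerance, keypoints are plain (x, y) pixel pairs, and any thresholds passed in are the caller's choice.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Constraint:
    origin: str           # keypoint the direction is measured from
    target: str           # keypoint the direction points to
    min_distance: float
    max_distance: float
    direction_deg: float  # expected direction, 0 = right, 90 = up in the image
    tolerance_deg: float

    def holds(self, keypoints):
        origin_x, origin_y = keypoints[self.origin]
        target_x, target_y = keypoints[self.target]
        dx = target_x - origin_x
        dy = origin_y - target_y  # flipped, because image rows grow downwards
        distance = math.hypot(dx, dy)
        angle = math.degrees(math.atan2(dy, dx))
        # Signed difference between the two angles, wrapped to [-180, 180).
        diff = (angle - self.direction_deg + 180.0) % 360.0 - 180.0
        in_range = self.min_distance <= distance <= self.max_distance
        return in_range and abs(diff) <= self.tolerance_deg


def detect_gesture(keypoints, gestures):
    """Return the first gesture whose constraints all hold, or None.

    gestures: list of (name, [Constraint, ...]) in the order of checking.
    """
    for name, constraints in gestures:
        if all(constraint.holds(keypoints) for constraint in constraints):
            return name
    return None
```

Because the list is evaluated front to back, a gesture near the end, such as goal in the text above, can get away with a single constraint on one arm: every gesture that would also satisfy it has already been ruled out.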

Similar to the mask presented in the previous section, the keypoints are scaled based on the distance and the direction to the referee before the constraints are checked. To suppress noise and to avoid detecting intermediate gestures the referee goes through while lifting the arms, detections are buffered. A gesture is only accepted if at least 40 % of the detections within the last ten images are the same. Dynamic gestures are handled specially: their different phases are counted as the same gesture.
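The buffering rule can be written down in a few lines. The sketch below uses hypothetical names. It assumes that an image without a matching gesture is stored as `None`, and that the phases of a dynamic gesture are mapped to a common label through an `aliases` dictionary.

```python
from collections import Counter, deque


class GestureBuffer:
    def __init__(self, size=10, min_ratio=0.4, aliases=None):
        self.detections = deque(maxlen=size)
        self.min_ratio = min_ratio
        # Maps phases of a dynamic gesture to one common label (assumption).
        self.aliases = aliases or {}

    def add(self, gesture):
        """Store the detection for one image; None means nothing matched."""
        self.detections.append(self.aliases.get(gesture, gesture))

    def accepted(self):
        """Return the gesture seen in at least min_ratio of the window."""
        counts = Counter(g for g in self.detections if g is not None)
        if not counts:
            return None
        gesture, count = counts.most_common(1)[0]
        # The ratio refers to the full window, so a partly filled buffer
        # is treated as if the missing entries were misses.
        if count >= self.min_ratio * self.detections.maxlen:
            return gesture
        return None
```

With the default parameters, four identical detections within the last ten images are sufficient, which matches the 40 % threshold stated above.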

Behavior

At RoboCup 2023, the referee gesture detection had to be performed during actual games. A gesture was continuously shown 5–15 s after the referee whistled. The robots had to report the gesture they detected through the wireless network no later than 20 s after the whistle. The first network packet received from a team after the whistle was the one that counted. We used this setup to coordinate the robots of our team without communication between them. Any robot that is not playing the ball and is located within a circular sector of 2.5–6 m and ±35° relative to the referee turns towards the referee. It then tries to detect the gesture. If it is not successful within 6 seconds, it steps sideways in the direction of the halfway line to get a different perspective. Robots closer to the halfway line report their findings earlier than robots that observe the referee at a more acute angle, assuming that the detections of the latter are more error-prone. Gestures that rely on detecting a single arm are reported even later. In addition, robots that are not able to detect a gesture, even if they have not tried to do so at all, report a default gesture shortly before the time window closes. The default gesture was selected to maximize the expected result of pure guessing (~30 % of the achievable points). Part of the challenge is also to actually detect the whistle. In case it was missed, the robots receive the change in game state from the league's referee application 15 s after the whistle, which is still early enough to send a guessed gesture.
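The test whether a robot should turn towards the referee can be sketched as follows. Several details are assumptions of this sketch rather than facts from our behavior code: positions are given in field coordinates in meters with the origin at the field center, the referee stands on the halfway line, and the ±35° are measured against the direction from the referee to the field center. The function name is hypothetical.

```python
import math


def should_observe_referee(robot_xy, referee_xy, plays_ball,
                           min_distance=2.5, max_distance=6.0,
                           max_angle_deg=35.0):
    """Return True if the robot is inside the sector in front of the referee."""
    if plays_ball:
        return False
    dx = robot_xy[0] - referee_xy[0]
    dy = robot_xy[1] - referee_xy[1]
    distance = math.hypot(dx, dy)
    if not min_distance <= distance <= max_distance:
        return False
    # Direction the referee faces: from the referee to the field center.
    facing = math.atan2(-referee_xy[1], -referee_xy[0])
    to_robot = math.atan2(dy, dx)
    # Signed angular difference wrapped to [-pi, pi).
    diff = (to_robot - facing + math.pi) % (2.0 * math.pi) - math.pi
    return abs(math.degrees(diff)) <= max_angle_deg
```

The same angular difference could also serve as the basis for the staggered reporting described above, with a smaller angle leading to an earlier report, but the actual delays are not specified here.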


Discussion

Although we won the challenges both in 2022 and 2023, our approach is not the one we would choose if success in soccer games actually depended on the detection of the referee gestures. On the one hand, MoveNet is designed to solve a more general problem than detecting a few predefined gestures, and it is therefore unnecessarily complex and thus slow. On the other hand, it lacks the information that only these gestures should be detected. It would be better to detect a gesture as a whole and provide a confidence for the whole detection rather than for each individual keypoint, as MoveNet does. Lukas Molnar [1] uses such an approach.

In 2023, our approach ran into some problems. To look at the referee, the NAO robots have to look up. As a result, the ceiling lights come into view. During RoboCup 2022 in Bangkok, we used essentially the whole image as input for the auto exposure of the camera, which worked fine (see image above). However, in Bordeaux, the ceiling lights were so strong that the whole image would become very dark. Therefore, we only used the lower half for the auto exposure. Under these conditions, the camera images have a low contrast and contain a bloom effect around bright lights. If these lights are close to the referee, parts of the body might actually disappear, preventing MoveNet from placing keypoints on them (see image on the right).

[1] Lukas Molnar: Visual Referee Detection on Nao Robots for RoboCup SPL 2022. Bachelor Thesis, ETH Zürich, 2022.